The emergence of generative artificial intelligence has created unprecedented pressure on higher education institutions to respond rapidly to a technology that challenges established approaches to teaching, learning, and assessment. Yet despite this urgency, most institutions found themselves navigating uncharted territory with limited guidance. In 2024, a survey of higher education leaders by Inside Higher Ed indicated that while approximately 90% of higher education institutions had AI initiatives underway, only 20% had published clear policies to guide faculty and students (AAUP, 2025). That gap between institutional activity and coherent guidance created confusion, inhibiting meaningful adoption and leaving faculty and staff to navigate AI integration without clear direction. The confusion was compounded by the rapid pace of technological change: tools that seemed cutting-edge six months earlier could be quickly superseded, while institutional policy processes moved at a pace ill-suited to such volatility. For faculty members already stretched thin by teaching, research, and service obligations, the expectation that they would independently develop AI literacy while simultaneously maintaining their existing responsibilities could feel overwhelming rather than empowering.

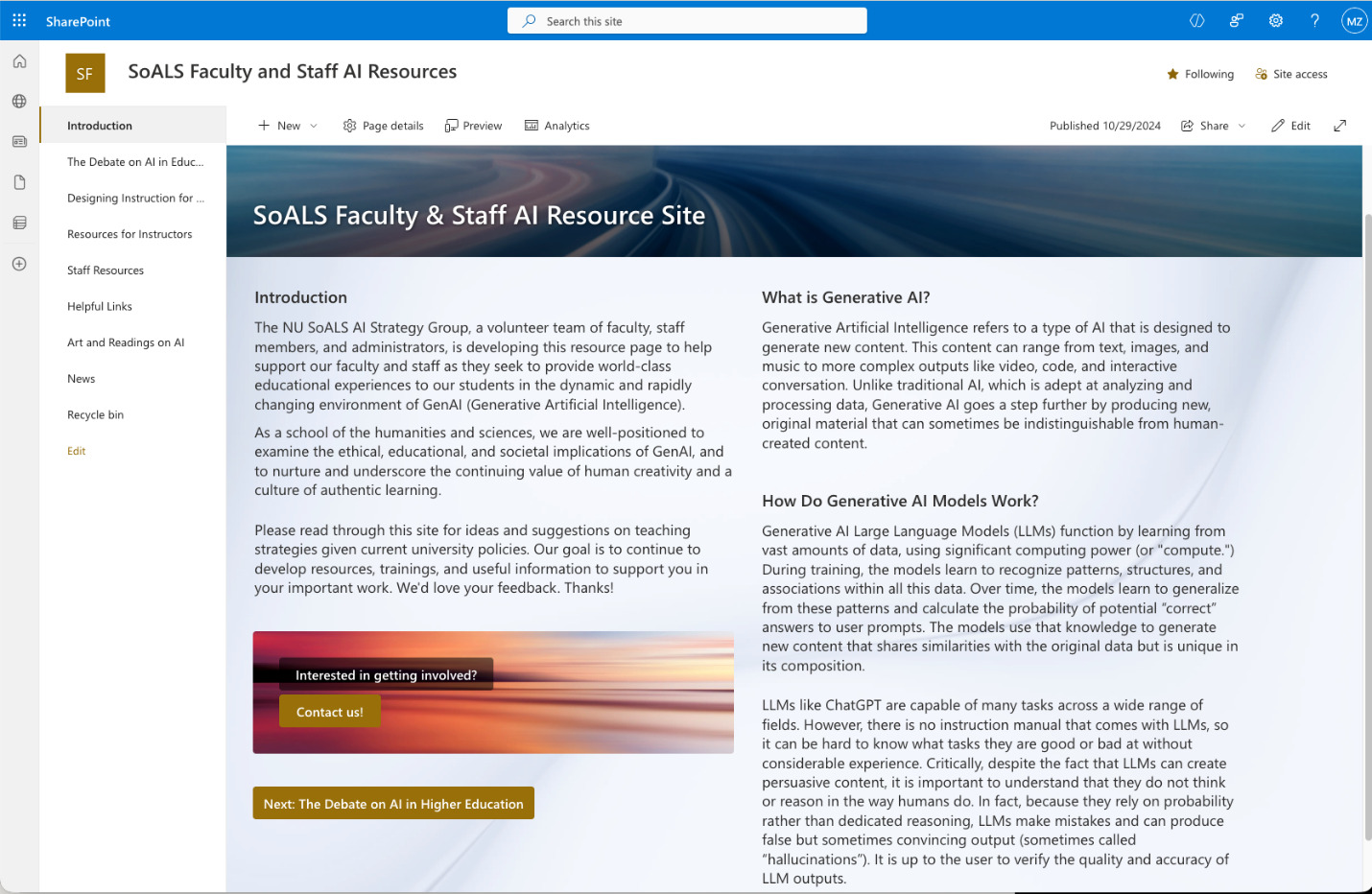

This narrative chronicles one school’s response to this challenge: the development of the SoALS Faculty and Staff AI Resources site at the School of Arts, Letters, and Sciences (SoALS) at National University (NU). What began as an NU Cause Research Institute Seed Grant project to study the feasibility of AI agents for student academic advisement evolved into something quite different when community engagement revealed a more fundamental need. As documented in the project’s final report, informal conversations with faculty, staff, and students surfaced a consistent theme: “faculty and staff needed support and training on generative AI and its implications—period” (Zimmer, 2025). Driven by the rapid evolution of the technology and its disruptive potential, this realization led to a strategic pivot. Rather than studying whether AI tools might eventually support student advisement, the project addressed what constituents said they needed: foundational resources, honest discussion of opportunities and challenges, and a space for the kind of critical reflection that characterizes liberal arts inquiry.

This practitioner account addresses three interconnected questions that emerged from the project experience and connect to broader patterns in the technology adoption literature. First, how can institutions build the foundation of trust and competence necessary for meaningful AI adoption, particularly when the technology itself remains poorly understood and rapidly evolving? Second, what distinguishes participatory, community-driven approaches to technology implementation from top-down mandates, and why does this distinction matter? Third, what unique contributions do liberal arts perspectives bring to AI integration in higher education, and how might humanities-centered approaches offer resources that purely technical framings lack? In exploring these questions, the article proceeds by first describing the SoALS AI Resources site and its development, then analyzing how its key features align with research-based principles of technology adoption, trust-building, and participatory implementation, and finally drawing implications for other institutions navigating similar challenges.

The SoALS AI Resources Site: Development and Design

The strategic pivot that shaped the SoALS AI Resources site illustrates a broader principle about successful technology implementation: meeting people where they are rather than where planners imagine they should be. The original CRI Seed Grant proposal I submitted in 2024 aimed to develop a school-wide feasibility study about the use of custom AI agents or other tools to support student advisement and success. The rationale was compelling on its face: even with National University’s current levels of support, first-generation and working adult students can struggle with navigating the online student experience, and AI-powered tools might help level the playing field. However, a series of informal conversations with SoALS full-time and part-time faculty, staff, and students revealed that my proposal presented what seemed to be a classic case of putting the cart before the horse. Given that decisions on institutional AI use and partnerships at our university reside above the school level, and more fundamentally, that the community lacked a shared understanding of generative AI’s capabilities and limitations, the proposed feasibility study seemed premature. Faculty could not meaningfully evaluate AI advisement tools when many had not yet developed basic familiarity with how large language models work, what they could and could not do reliably, and how they might affect teaching and assessment.

This recognition led to a change in direction that leveraged the knowledge and ideas of the SoALS AI Strategy Group, a volunteer team of faculty, staff members, and administrators who collaboratively developed what would become the AI Resources site. Rather than a top-down initiative driven by administration or a technical team, the group brought together people with diverse disciplinary backgrounds, institutional roles, and perspectives on AI. Some members approached AI with enthusiasm for its pedagogical possibilities; others harbored significant concerns about academic integrity, labor displacement, or the homogenization of student writing. Instead of suppressing these tensions, the group’s discussions modeled the kind of multivocal engagement the site would eventually encourage. The resulting resource reflected this diversity of perspective, presenting AI neither as an unambiguous good to be embraced nor as a threat to be resisted, but as a complex phenomenon requiring ongoing critical engagement. The site was conceived from the outset as a living document designed to evolve with the fast-moving technological landscape, regularly updated to reflect new developments, shifting policies, and emerging resources.

The site’s architecture reflects several deliberate design choices that distinguish it from generic AI resource collections. The Introduction page establishes the school’s distinctive positioning as a school of the humanities and sciences, “to examine the ethical, educational, and societal implications of GenAI, and to nurture and underscore the continuing value of human creativity and a culture of authentic learning” (SoALS AI Resources site). This framing situates AI engagement within the school’s existing mission and values rather than treating it as an external technical matter to be managed. The page also includes an invitation for community involvement, signaling that the site represents an ongoing conversation rather than a finished product handed down from above. Subsequent pages provide practical resources for instructors and staff, curated external links to high-quality scholarship and tools, current news developments, and policy guidance, including honest assessments of AI detection tool limitations. However, two sections particularly embody the site’s distinctive approach: the Debate on AI in Education page and the Art and Readings on AI page, each of which merits closer examination for what it reveals about liberal arts engagement with technology.

Creating Space for Debate: Trust Through Transparency

The Debate on AI in Education page reflects the vigorous internal discussions that shaped the project and models an approach to technology engagement grounded in intellectual honesty rather than promotional advocacy. Rather than presenting a unified institutional position, the page organizes perspectives around “The Pros” and “The Cons,” acknowledging that reasonable people examining the same evidence may reach different conclusions. This design choice aligns with research on trust in technology implementation, which consistently identifies transparency as foundational to building the institutional credibility that enables adoption. The influential model of Mayer et al. (1995) defined organizational trust as the willingness to be vulnerable based on positive expectations of another party and identified three components of trustworthiness: ability, the competence to deliver on promises; benevolence, a genuine orientation toward the trustor’s interests; and integrity, adherence to principles the trustor finds acceptable. Research suggests that this trust-building is central to technology adoption and to the success or failure of digital transformation; indeed, McKinsey & Company (2019) research indicates that 70% of such transformation initiatives fail, with the vast majority of failures attributed to cultural and organizational factors, particularly employee resistance, rather than technical shortcomings. These numbers suggest that institutions treating human dimensions as secondary concerns, to be addressed after technical implementation is complete, are likely to join the many failed transformations regardless of how sophisticated their technologies may be. The Debate page attempts to demonstrate integrity by refusing to oversimplify a genuinely complex situation, an approach that may seem counterintuitive when institutions often feel pressure to project confidence and clarity.

Emphasizing ongoing discourse rather than settled conclusions also reflects what the technology adoption literature identifies as a critical distinction between initial adoption and sustained engagement. In their influential study, Karahanna et al. (1999) found that potential adopters’ intention to adopt a technology is determined primarily by normative pressures (what others think one should do), while continuing users’ intention to keep using it is determined by attitude (one’s own assessment of value). Organizations can achieve initial adoption through mandates or social pressure, but sustained meaningful use tends to require that individuals develop their own positive relationship with the technology. By providing at least one small avenue for faculty to form their own informed judgments rather than simply being told what to conclude, the Debate page, in combination with the evolving set of curated resources across the site, positions the site as a support for ongoing reflection rather than a delivery mechanism for predetermined institutional messages. In creating room for deliberation, the site tries to treat faculty and staff as professionals capable of wrestling with complexity rather than as passive recipients of administrative guidance. The results of this effort will need further, systematic review over time to test whether the approach has yielded its intended outcomes.

Liberal Arts Perspectives: The Art and Readings Section

Another distinctive element of the SoALS site is the Art and Readings on AI page, which brings a humanities lens to AI engagement that is notably absent from many technology resource collections. The page’s rationale reflects liberal arts values explicitly: “In SoALS, we appreciate art and literature for a variety of reasons, from the enjoyment they provide to the critical thinking and empathy they develop to their ability to prompt us to examine our lives and the world around us. In times of uncertainty and change, art and literature serve as an arena for reflection on, and a response to, technological and social changes.” Artistic and literary engagement in this case exists not as peripheral enrichment but as a legitimate methodology for understanding technological transformation. The page is organized into two categories (Artistic and Literary Reflections on AI, and Readings on Human Relationships with AI), suggesting that understanding AI requires not only technical knowledge but also the interpretive and reflective capacities that humanities disciplines cultivate. By including this section, the site implicitly argues that the question of how to respond to AI is not merely a technical or policy matter but a humanistic one, requiring the kind of meaning-making that has always been central to liberal arts inquiry.

This humanities-centered approach addresses a gap both in the technology adoption literature and in institutional AI responses more broadly. Research on AI adoption in higher education has focused predominantly on STEM contexts, leaving humanities faculty perspectives underexamined. Contrary to assumptions that humanities faculty resist technology, Baytas and Ruediger’s (2025) research involving 246 interviews across 19 institutions found that humanities faculty demonstrated medium to high AI familiarity, often exceeding expectations, driven specifically by concerns about student writing and academic integrity. Humanities instructors were more likely than STEM or social science colleagues to access university-provided AI support resources (Baytas & Ruediger, 2025), suggesting not technophobia but a distinctive engagement shaped by disciplinary concerns. Indeed, humanities principles and perspectives may also bring unique value to AI implementation, including critical evaluation of AI outputs through close reading skills and analysis of rhetorical strategies; ethical frameworks drawing on philosophy, religious studies, and moral reasoning traditions; cultural contextualization examining whose knowledge AI systems encode and reproduce; and questions of meaning that resist purely instrumental framings of technology adoption. The Art and Readings page begins to operationalize these potential contributions by modeling the interpretive, reflective engagement that humanities disciplines tend to practice.

The inclusion of artistic and literary resources also reflects an understanding that technology adoption is never purely rational but involves affective, imaginative, and cultural dimensions that technical training alone cannot address. When faculty encounter AI, they bring not only questions about functionality and policy but also anxieties about professional identity, concerns about what authentic education means, and hopes or fears shaped by cultural narratives about artificial intelligence. Science fiction, visual art, and philosophical reflection engage these dimensions in ways that user manuals and policy documents cannot. The page’s design suggests that processing the advent of generative AI requires not only learning how to use tools but also developing frameworks for understanding what these tools mean—for education, for human creativity, for the value of the skills faculty have spent careers cultivating. By creating space for this kind of reflection, the site acknowledges that AI adoption involves identity work and meaning-making, not only skill acquisition. This humanities-informed approach may prove particularly valuable for faculty who experience AI not as a neutral tool but as a challenge to deeply held values about teaching, learning, and the purpose of education itself. Over time, further analysis and examination will be needed to understand the impacts of this approach.

Participatory Development: Community-Driven Implementation

The process through which the SoALS AI Resources site was developed exemplifies what the technology adoption literature identifies as participatory implementation, an approach that consistently outperforms top-down mandates in achieving sustained engagement (Reichheld et al., 2025). The project’s willingness to pivot based on community feedback demonstrates a commitment to constituent-driven rather than technology-driven development. When informal conversations revealed that the originally proposed feasibility study put the cart before the horse, this feedback could have been dismissed as resistance to be overcome, or the project could have proceeded with the original plan while adding educational components as a concession. Instead, the feedback fundamentally reshaped the project’s direction, and the wisdom of the CRI Seed Grant program and team was deeply evident in its flexibility and supportiveness. That flexibility made the project’s responsiveness possible, and the outcome reflects what scholars like De Cremer (2024) have argued about AI adoption: systems developed without employee input are more likely to provoke resistance and fail to achieve intended outcomes. Our final project report for the resource site explicitly noted the importance of “listening to what constituents actually wanted (rather than push[ing] an agenda about what they should want),” framing responsiveness not as a compromise of the original vision but as fidelity to the project’s deeper purpose of serving the National University community.

Research suggests that the distinction between participatory and top-down implementation is particularly consequential for AI adoption because generative AI is a general purpose technology that can be used in a wide variety of ways, and often most effectively by those who are closest to the relevant use cases (Iansiti & Nadella, 2022; Sampath, 2024). As Sowmyanarayan Sampath (2024), EVP and CEO of Verizon Consumer, has argued in Harvard Business Review, organizations should “let innovation happen on the frontline and support it,” and “give teams ownership of the process.” In an academic context, AI presents many different opportunities and challenges. The opportunities include potentially positive developments like personalized learning, custom chatbots, and AI-powered simulations; the challenges include ensuring academic integrity in conventional assignments like asynchronous discussion board posts or essays, as well as validly assessing student learning in an AI-powered environment. Indeed, as Iansiti and Nadella suggest, a participatory approach helps to bridge functional silos and, when combined with training and empowerment of frontline employees, drives the “intensity and impact of transformation” on “a range of innovation initiatives” (2022).

The SoALS AI Strategy Group’s collaborative structure operationalized participatory principles in concrete ways. The group brought together faculty, staff, and administrators with diverse perspectives, creating a governance structure that could surface tensions and negotiate differences rather than imposing a single viewpoint. Presentations at multiple faculty meetings, both full-time and part-time, extended participation beyond the core group, building awareness while inviting ongoing input. The site’s invitation for community contributions signals that development remains open rather than concluded. This approach aligns with Baytas and Ruediger’s (2025) finding that peer-to-peer learning is among the most valued support mechanisms for faculty AI engagement: faculty tend to trust guidance from colleagues who have navigated similar challenges more than they trust institutional mandates or external consultants. By positioning the site as a community resource developed by and for the SoALS community, rather than as an administrative initiative or an external product, the project created conditions favorable to engendering the trust and ownership that enable meaningful adoption. The site’s subsequent use as a model for the AI Council’s university-wide page suggests that bottom-up innovation can influence institutional direction, not merely respond to it. It is important to note, however, that the impact of these efforts requires further investigation, even though emulation by other university bodies such as the AI Council anecdotally suggests that the approach has value.

While the “Debate” and “Art and Readings” sections embody the site’s distinctive liberal arts approach, the practical resource pages address immediate needs that faculty and staff articulated through the community engagement process. The Resources for Instructors page provides policy guidance, including university policies and procedures, guidance on AI detection tools and their critical limitations, and AI syllabus policy examples. A significant element is the site’s honest assessment of detection tool reliability: “It is National University’s current policy that AI detection software is not sufficient, by itself, to determine if a student has used generative AI for an assignment, and a positive result from AI detection software cannot be the only rationale for a lowered or failing grade.” This transparency about limitations exemplifies the integrity dimension of trust-building identified by Mayer and colleagues (1995); rather than offering false reassurance about tools that might detect AI use, the site acknowledges genuine uncertainty and cautions against over-reliance on imperfect solutions. Such honesty may initially feel uncomfortable, but it is meant to build the credibility that sustains trust when people discover that the complications acknowledged upfront are real.

The Helpful Links page curates high-quality external resources, including Ethan Mollick’s influential “One Useful Thing” blog, Harvard’s metaLAB AI Pedagogy Project, the WAC Clearinghouse TextGenEd collection, and the Critical AI journal from Duke University Press, among others. This curation reflects a recognition that no single institution can develop all necessary resources internally and that connecting faculty to the broader scholarly conversation strengthens rather than weakens institutional efforts. Meanwhile, the News section positions the site as a living resource that engages with ongoing developments, featuring pieces on higher education challenges, critical perspectives, and research on AI’s impacts. This acknowledges the rapid pace of change that characterizes the current AI landscape: resources that were current at publication may become outdated within months, and faculty need ongoing connection to emerging developments rather than a snapshot frozen in time. The site’s conception as a “living document” addresses this reality, though it also creates ongoing demands for maintenance and updating that extend well beyond initial development. By providing concrete examples of AI applications, policy templates, and curated pathways to external expertise, the SoALS site tries to reduce barriers to engagement while also attempting to build the self-efficacy that research suggests can facilitate adoption. The site’s multiple entry points, from basic explanations of how generative AI works to advanced discussions of pedagogical transformation, endeavor to allow users at different levels of familiarity to engage productively, hoping to avoid both the condescension of assuming everyone is a beginner and the exclusion of assuming everyone already possesses technical sophistication.

Conclusion

While still a work in progress that requires systematic investigation to document material impacts, the development of the SoALS Faculty and Staff AI Resources site illustrates both potential challenges and possibilities in building institutional capacity for AI engagement during a period of rapid technological change and widespread uncertainty. What began as a feasibility study for AI-based student advisement evolved through community engagement into a project attempting to address the more immediate need for AI literacy, critical reflection, and practical support. The project’s willingness to pivot based on constituent feedback, rather than proceeding with an original plan that community members identified as premature, reflects the responsive, participatory approach that research consistently finds more effective than top-down mandates in achieving sustained, meaningful engagement (De Cremer, 2024; Iansiti & Nadella, 2022). That research also suggests organizations may achieve initial adoption through pressure and mandates, but that the genuine integration that transforms practice requires that people develop their own positive relationship with new technologies. This tends to be a relationship that depends on trust, respect for professional judgment, and space for the kind of ongoing sense-making that the site aspires to support.

Ultimately, the SoALS AI Strategy Group sought to leverage a fundamental insight from the technology adoption literature: success often depends more on attending to human dimensions than on technical sophistication, and the 70% failure rate of digital transformations cited by McKinsey & Company (2019) reflects not technology deficiencies but failures to attend to the training that builds genuine competence, to trust, and to the participation that provides meaningful voice. The SoALS AI Resources site represents one attempt to operationalize these principles in the context of a liberal arts school responding to generative AI; it is not the only possible model, and certainly not one without limitations and ongoing challenges. More work is needed to determine and verify the impacts of this specific project. Over time and as AI continues to evolve, further research and analysis will be needed to determine whether organizations that have attempted to build the foundations of trust, competence, and community are better positioned to adapt.

Acknowledgment

I would like to acknowledge the support and leadership of Dean Nicole Polen-Petit and Associate Dean Julie Wilhelm of National University’s School of Arts, Letters, & Sciences (SoALS), as well as the wisdom, support, and thoughtfulness of National University’s Cause Research Institute team, including Heather Hussey and Athena McGhan, and the JOGE editorial team. In addition, this project would not have been possible without the excellent work of the SoALS AI Strategy Group on our resource site and AI initiatives: Karen Goldman, Julie Wilhelm, John Miller, Fede Forniciari, Allyson Washburn, Huda Makhluf, Jacqueline Ruiz, Colin Dickey, Lorna Zukas, Frank Montesonti, Shelley Rees, Laine Goldman, and Larraby Fellows.

Disclosure of Interest

In addition to my academic position, I am a co-founder and managing partner of a firm that consults with employee-owned companies, nonprofits, and other mission-driven organizations on AI adoption.

AI Statement

I used Claude Opus 4.5, Perplexity Pro, and Gemini 3 Pro for assistance with research, synthesizing content, reviewing writing, and formatting citations. All errors are my own.