Objectives

- Develop and test a GenAI tool that can offer graduate students personalized learning experiences
- Explore students’ user experience of the tool
- Examine students’ perceptions of the tool

Literature Review

The Technological Pedagogical Content Knowledge (TPACK) framework served as the theoretical foundation for this research. Developed by Mishra and Koehler (2006), the framework offers a comprehensive lens for understanding the expertise educators need to successfully integrate technology into their teaching. Its founding principles build upon Shulman’s (1986) concept of Pedagogical Content Knowledge (PCK), which contends that effective teaching requires more than subject expertise; it also requires an understanding of how to make that subject matter comprehensible through specific instructional strategies. Mishra and Koehler extended this concept by adding Technological Knowledge (TK) as a third pillar. The core of the framework is the “dynamic equilibrium” created when the three primary knowledge domains, Content Knowledge (CK), Pedagogical Knowledge (PK), and Technological Knowledge (TK), intersect to enhance understanding. Central to the TPACK framework is the belief that technology should not be an “add-on” but must be purposefully synthesized with specific teaching methods and subject matter to enhance student learning within a unique educational context (Mishra & Koehler, 2006). The TPACK framework was applicable to this research because it underscores the importance of combining teaching expertise and knowledge with GenAI technology to maximize graduate students’ research development experiences.

The increasing complexity of modern higher education environments necessitates innovative approaches to curriculum design and delivery, particularly in graduate programs (Bobula, 2024; Johnson et al., 2024; Kapterev, 2023; Miller, 2024; Xia et al., 2024). With the rapidly changing landscape of AI and the emergence of new GenAI technologies, the way in which students research and acquire knowledge has changed (Bobula, 2024; Kapterev, 2023; Walczak & Cellary, 2023). One significant ongoing problem is the challenge of effectively engaging adult learners in online learning, which often results in suboptimal learning outcomes and reduced academic performance (Chergarova et al., 2023; Johnson et al., 2024; Sekwatlakwatla & Malele, 2023). This issue is exacerbated in specialized tasks such as research development, where students may struggle with complex concepts without effective instructional tools. Interactive and engaging tools are crucial for enhancing students’ grasp of research methodologies and development, which are essential for their academic and professional success (Robles & Ek, 2024; Ruiz-Rojas et al., 2024; Xia et al., 2024). Existing literature reveals a notable gap in research on the development and evaluation of AI-enhanced learning tools specifically designed for graduate-level research development. While there have been recent studies on general e-learning tools (Farrelly & Baker, 2023; Johnson et al., 2024; Kapterev, 2023; Lee & Patel, 2024), less attention has been paid to how advanced AI algorithms can be used to create tailored, interactive learning experiences for graduate students, particularly adult learners (Baidoo-Anu et al., 2024; Swaak, 2024). Additionally, studies on the integration of AI in research development often lack a focus on validating these tools through expert reviews and feedback from students (Bobula, 2024; Lee & Patel, 2024).

Limited research has been conducted on the use of GenAI in higher education, particularly regarding its potential to engage adult learners in more effective learning experiences (Baidoo-Anu et al., 2024; de la Torre & Baldeon-Calisto, 2024; Johnson et al., 2024; Miller, 2024; Swaak, 2024). This study contributes to the literature by offering insights into how AI-driven tools can be optimized for graduate education and research development, helping to fill a gap in research on advanced instructional design and its impact on adult learner outcomes. These insights may provide valuable guidance for enhancing online learning environments in higher education.

Methods

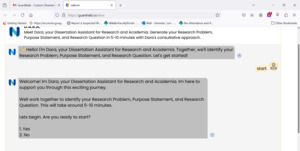

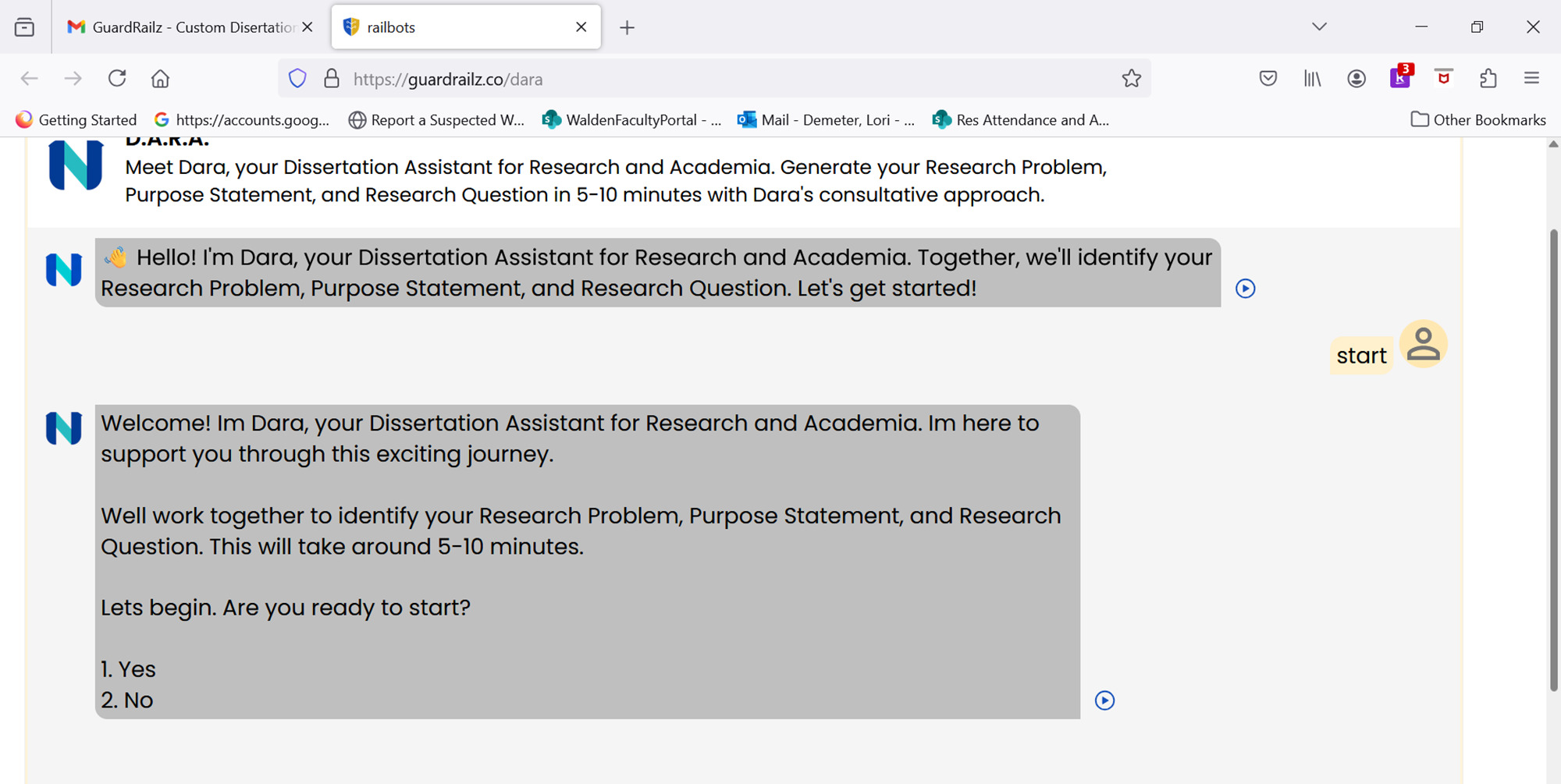

An exploratory design using a semi-structured survey was employed to gather rich, in-depth information not available through a purely quantitative method. A 10-15 minute learning module was developed by the researcher and hosted by a private contractor. The module was tested by two experts to strengthen validity, and minor revisions were made based on their feedback. At the end of the module, students were invited via a link to complete a brief (5-10 minute) survey on their experiences and perspectives.

Recruitment occurred via an email sent to a list of current CMP course enrollees, which COLPS leadership forwarded to the researcher. The CMP course was chosen because it is a major milestone course in which many students develop their research ideas. The email included a direct link to the module for students interested in participating in the study. Students provided informed consent by clicking on the first page of the module before they could proceed to the module itself. Data collection occurred from August through October 2024. Qualtrics was used for data collection and analysis (thematic development).

Analysis

A total of 41 graduate students completed the DARA module and survey. This sample included 24 Doctoral Criminal Justice (DCJ) students and 17 Doctoral Public Administration (DPA) students (see Table 1).

Of those 41 participants, over two-thirds (68%) were aged 18-35, with almost half (19, or 46%) of all participants being aged 18-25 (Table 2).

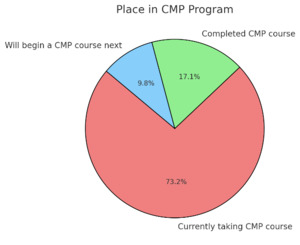

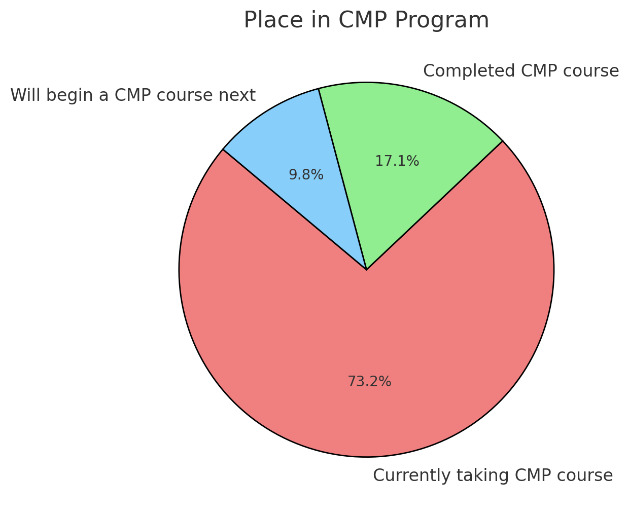

Regarding their place in their respective programs, the majority of students (30 of 41, or 73%) were currently taking the CMP course, while seven students (17%) had completed the CMP course, and four students (10%) were to begin the CMP course within the next three months (Figure 1).

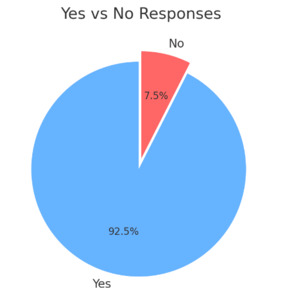

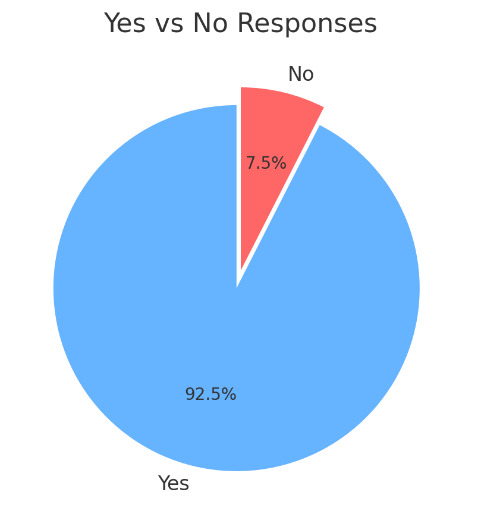

Regarding the usefulness of the DARA tool, the vast majority of students (37 of 41, or 90%) indicated that the module helped them in developing and/or aligning their problem, purpose, and research question[s] (Figure 2).

Regarding the most helpful aspect of the DARA tool, the majority of students (26 of 36, or 72%) indicated that assistance in developing their own research problem was most helpful. Eight students (22%) reported that the examples of how the research problem, purpose statement, and research questions can be aligned were most useful, and two students (6%) stated that developing the purpose statement was most helpful. Five students declined to answer the question.

Students were then asked about the ease of use of the DARA tool itself, and they could select more than one response. Overall, students considered the module easy to understand and use. Thirty-five students indicated that the module was easy to understand, and 36 stated that it was easy to complete. Only three students reported that the module was difficult to understand, and one indicated that it was difficult to complete (see Figure 4).

Students were asked, “What are your thoughts specifically regarding use of DARA tool in comparison to traditional faculty feedback?” Of the 36 students who responded to the question, a strong majority (31, or 86%) indicated that the DARA tool was more useful than traditional faculty feedback. Eight students indicated that the tool was significantly more helpful than traditional faculty feedback, while 23 students indicated that it was slightly more useful. Among the reasons cited were that the feedback was almost instantaneous, that the tool allowed for several iterations of wording components such as the problem and purpose, and that there was no perceived judgement on the quality of those components, so students felt more at ease working through their research problems.

Four students indicated that the DARA tool was equally helpful to traditional faculty feedback, while one student indicated the tool was slightly less helpful than traditional faculty feedback. Four students indicated that they preferred not to answer that question.

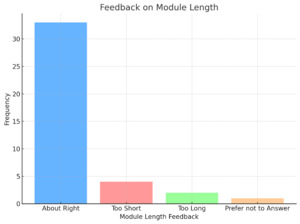

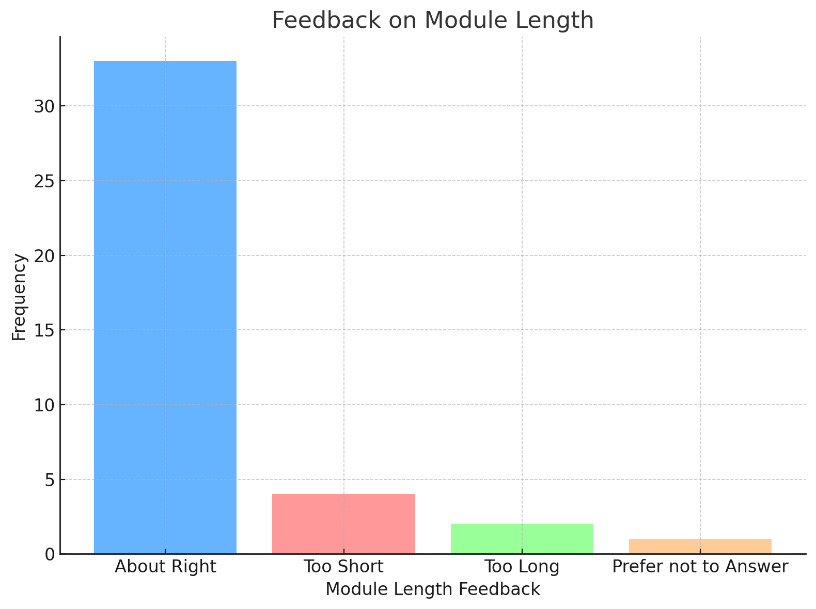

Students were then asked to provide their thoughts regarding the module’s length. Most students (33 of 40, or 83%) indicated that the module’s length was about right. Four students stated that the module’s length was too short, while two students reported that it was too long. One student indicated they preferred not to answer, and one student left the question blank.

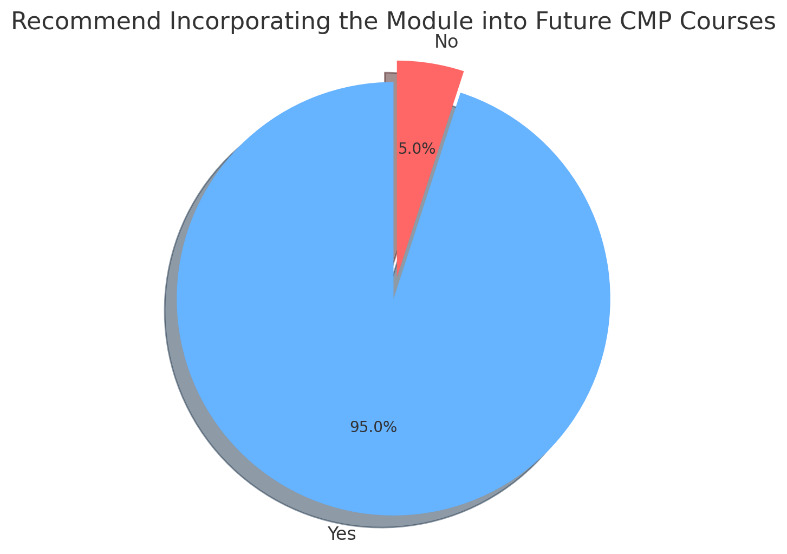

The final survey question asked students whether they would recommend incorporating the DARA tool into a future course. Almost all students (38 of 40, or 95%) indicated ‘Yes’, while two students indicated ‘No’. One student did not respond.

Conclusion

The findings suggest that GenAI tools have the potential to enhance student engagement and dissertation development success in graduate learning. Feedback from graduate DPA and DCJ students indicated that the DARA tool was helpful in several ways. Students reported that the module helped them in developing and/or aligning their problem, purpose, and research question[s], and that it was most helpful in defining the research problem. In addition, a strong majority of students indicated that the DARA tool was more useful than traditional faculty feedback, and several students cited its real-time, interactive nature as a reason. Potential future implications include further cultivating student engagement and success with GenAI learning tools and improving students’ learning experiences through enhanced feedback and faster progress on dissertation research. Recommended next steps include incorporating the module and survey into the CMP course itself to gain further feedback and insights. Piloting the tool in the DIS-9901 course may also prove useful for students who are just beginning the formal dissertation process.